AI Basics — Where Every Journey Begins

No jargon, no prerequisites. Just clear, friendly explanations of how artificial intelligence really works — and why it matters to you.

The Foundation

What is Artificial Intelligence?

Artificial Intelligence (AI) is the ability of a computer system to perform tasks that normally require human intelligence — things like understanding language, recognizing faces, making decisions, and solving problems.

Think of it like this: when you teach a child to recognize a cat by showing them many pictures of cats, eventually they can spot a cat they've never seen before. AI learns in a similar way — through exposure to vast amounts of data, it develops the ability to recognize patterns and make predictions.

"Artificial Intelligence is not about creating robots that think like humans — it's about building software that learns from experience to solve specific problems better over time."

Unlike traditional software, which follows strict rules set by programmers ("if X, then do Y"), AI systems learn their own rules from data. This is why they can handle messy, complex real-world situations that rule-based programs struggle with.

AI already affects your daily life more than you realize — from the recommendations on Netflix and Spotify, to spam filters in your email, to the voice assistant on your phone. It's not science fiction; it's already woven into the fabric of modern life.

Core Knowledge

4 Key AI Concepts Every Beginner Should Know

These four concepts form the foundation of modern AI. Understanding them will help everything else make sense.

Machine Learning

Machine Learning (ML) is the most common type of AI today. Instead of being programmed with specific rules, ML systems are trained on data — they learn by example. Feed a system thousands of emails labeled "spam" or "not spam" and it learns to classify future emails on its own.

Key insight: ML models generally get better as they are trained on more (and better) data. Each round of training on new examples can improve the model's predictions, somewhat like a person learning from experience.
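To make "learning by example" concrete, here is a minimal sketch of a spam filter that learns from labeled emails. It assumes a naive Bayes classifier (one classic learning method among many, not the only way spam filters work) and a tiny made-up dataset; the function names `train` and `classify` are hypothetical, chosen for illustration.

```python
import math
from collections import Counter

def train(examples):
    """Learn word counts per label from (text, label) pairs."""
    word_counts = {"spam": Counter(), "not spam": Counter()}
    label_counts = Counter()
    for text, label in examples:
        label_counts[label] += 1
        word_counts[label].update(text.lower().split())
    vocab = set(word_counts["spam"]) | set(word_counts["not spam"])
    return word_counts, label_counts, vocab

def classify(text, model):
    """Pick the label whose learned word statistics best explain the text."""
    word_counts, label_counts, vocab = model
    total = sum(label_counts.values())
    best_label, best_score = None, -math.inf
    for label in word_counts:
        # Log prior: how common this label was in the training data
        score = math.log(label_counts[label] / total)
        n_words = sum(word_counts[label].values())
        for word in text.lower().split():
            # Add-one (Laplace) smoothing so unseen words don't zero out the score
            score += math.log((word_counts[label][word] + 1) / (n_words + len(vocab)))
        if score > best_score:
            best_label, best_score = label, score
    return best_label

# Hypothetical labeled training data
examples = [
    ("win a free prize now", "spam"),
    ("claim your free money", "spam"),
    ("cheap pills free offer", "spam"),
    ("meeting moved to monday", "not spam"),
    ("lunch with the team tomorrow", "not spam"),
    ("project notes for monday", "not spam"),
]
model = train(examples)
print(classify("free prize money", model))
print(classify("team meeting on monday", model))
```

No one wrote a rule like "emails containing 'free' are spam"; the classifier picked up that association from the examples. Adding more labeled emails would refine the counts it relies on.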

Deep Learning

Deep Learning is a powerful subset of Machine Learning that uses artificial neural networks with many layers (hence "deep"). It's what powers the most impressive modern AI feats, such as image recognition, speech-to-text, and large language models like the GPT models behind ChatGPT.

Key insight: Deep learning requires large amounts of data and computing power, but it can learn incredibly complex patterns that older ML methods couldn't.

Neural Networks

Neural networks are the architecture that makes deep learning possible. They're loosely inspired by the human brain — made up of layers of interconnected "neurons" (mathematical functions) that process information. Data flows through the layers, transforming at each step until an output is produced.

Key insight: You don't need to understand the math to use neural network-based tools, but knowing they exist helps you understand why AI systems behave the way they do.
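For readers curious what one of those "neurons" actually computes, here is a minimal sketch: a weighted sum of its inputs plus a bias, passed through an activation function. The weights and bias below are made-up numbers, and the sigmoid is just one common activation choice (others, such as ReLU, are widely used).

```python
import math

def neuron(inputs, weights, bias):
    """One artificial neuron: weighted sum of inputs plus a bias, passed through a sigmoid."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1 / (1 + math.exp(-total))  # squashes any number into the range (0, 1)

# Hypothetical inputs, weights, and bias
output = neuron([1.0, 0.5], [0.8, -0.4], 0.1)
print(output)
```

Training a network means adjusting numbers like `weights` and `bias` across many such neurons.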

Natural Language Processing

Natural Language Processing (NLP) is the branch of AI focused on enabling computers to understand, interpret, and generate human language. It's what powers chatbots, translation services, sentiment analysis, and tools like ChatGPT and Claude.

Key insight: NLP has advanced dramatically in recent years. Modern large language models (LLMs) can write essays, answer questions, summarize documents, and even write code.

Visual Explainer

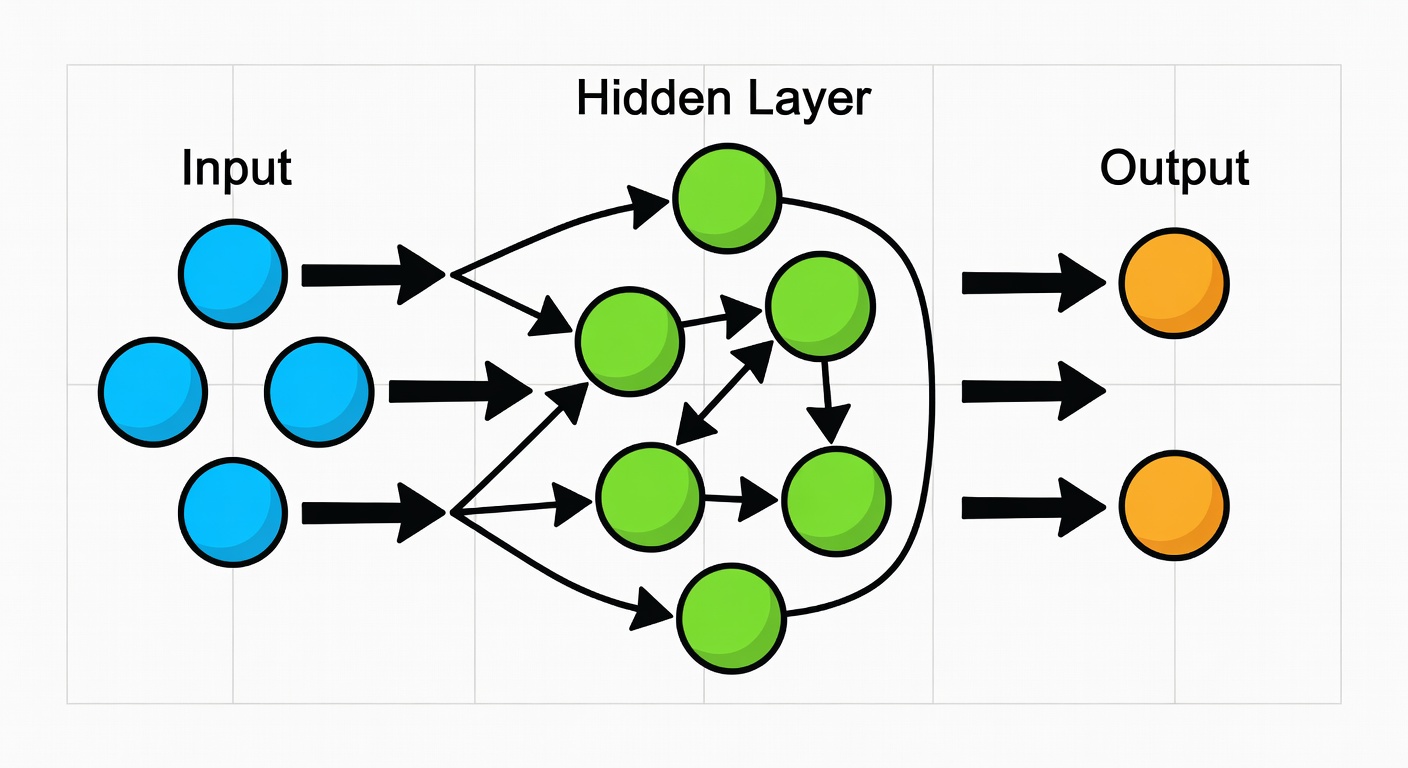

How Neural Networks Work

A neural network learns by adjusting its internal weights (anywhere from thousands to billions of them) until it can reliably map inputs to correct outputs.

Input Layer — Receiving Data

Raw data enters the neural network through the input layer. This could be pixel values from an image, words in a sentence, or numbers from a spreadsheet. Each input becomes a signal for the next layer.
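Networks only work with numbers, so raw data has to be encoded first. The sketch below shows two simple encodings, assuming 8-bit grayscale pixels and a bag-of-words representation for text; both are illustrative choices (modern language models use learned token embeddings instead), and the function names are hypothetical.

```python
def encode_pixels(pixels):
    """Scale 8-bit grayscale pixel values (0-255) to the range 0.0-1.0."""
    return [p / 255 for p in pixels]

def encode_words(sentence, vocabulary):
    """Bag-of-words: count how often each vocabulary word appears in the sentence."""
    words = sentence.lower().split()
    return [words.count(v) for v in vocabulary]

vocab = ["cat", "dog", "sat", "mat"]  # hypothetical vocabulary
print(encode_pixels([0, 51, 255]))                    # [0.0, 0.2, 1.0]
print(encode_words("The cat sat on the mat", vocab))  # [1, 0, 1, 1]
```

Each resulting number becomes one signal fed into the input layer.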

Hidden Layers — Finding Patterns

The magic happens in the hidden layers. Each neuron receives signals, applies a mathematical transformation, and passes the result forward. In an image-recognition network, for example, early layers tend to detect simple features (edges, shapes), while deeper layers detect more complex patterns (faces, objects).
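Here is a minimal sketch of data flowing through two hidden layers. It assumes fully connected layers with a ReLU activation (a common but not universal choice), and every weight and bias is a made-up number rather than a trained value.

```python
def relu(x):
    """ReLU activation: pass positive values through, replace negatives with 0."""
    return max(0.0, x)

def dense_layer(inputs, weights, biases):
    """Fully connected layer: each row of `weights` belongs to one neuron."""
    return [relu(sum(x * w for x, w in zip(inputs, row)) + b)
            for row, b in zip(weights, biases)]

x = [1.0, 2.0]  # values arriving from the input layer
# Hidden layer 1: 3 neurons, each looking at both inputs
hidden1 = dense_layer(x, [[0.5, -0.25], [-1.0, 0.5], [0.25, 0.25]], [0.0, 0.5, -0.5])
# Hidden layer 2: 2 neurons, each looking at all 3 outputs of layer 1
hidden2 = dense_layer(hidden1, [[1.0, 1.0, 1.0], [-1.0, 0.0, 2.0]], [0.0, 0.0])
print(hidden1)  # [0.0, 0.5, 0.25]
print(hidden2)  # [0.75, 0.5]
```

Each layer's output becomes the next layer's input; stacking more layers is what makes a network "deep."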

Output Layer — Making Predictions

The final layer produces the network's answer — whether that's "this is a cat", a translated sentence, or a predicted stock price. The output is the result of all the transformations through the network.
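For a classification task like "is this a cat?", networks commonly end with a softmax function that turns the last layer's raw scores into probabilities. A minimal sketch follows; the labels and scores are hypothetical.

```python
import math

def softmax(scores):
    """Turn raw output scores into probabilities that sum to 1."""
    m = max(scores)  # subtracting the max avoids overflow without changing the result
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

labels = ["cat", "dog", "bird"]
scores = [2.0, 1.0, 0.1]  # hypothetical raw outputs from the last layer
probs = softmax(scores)
prediction = labels[probs.index(max(probs))]
print(prediction)  # cat
```

The network's "answer" is simply the label with the highest probability.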

Backpropagation — Learning from Mistakes

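When the network gets something wrong, an algorithm called backpropagation works out how much each weight contributed to the error, and an optimization step (typically a form of gradient descent) nudges every weight in the direction that reduces it. Repeated over many examples, often millions of updates, this gradually makes the network more accurate.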
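The sketch below shows the core "learn from mistakes" loop on the smallest possible case: a single sigmoid neuron learning logical OR with gradient descent. With only one neuron there are no hidden layers to propagate through, so this illustrates the underlying idea (gradients from the chain rule, then small weight updates) rather than full multilayer backpropagation. The learning rate and epoch count are arbitrary choices.

```python
import math

def sigmoid(z):
    return 1 / (1 + math.exp(-z))

# Training data for logical OR: output 1 if either input is 1
data = [([0, 0], 0), ([0, 1], 1), ([1, 0], 1), ([1, 1], 1)]

weights, bias = [0.0, 0.0], 0.0
learning_rate = 0.5

for epoch in range(2000):
    for x, y in data:
        # Forward pass: make a prediction
        p = sigmoid(sum(xi * wi for xi, wi in zip(x, weights)) + bias)
        # Backward pass: with a sigmoid and cross-entropy loss, the chain rule
        # gives d(loss)/d(z) = p - y, so each weight's gradient is (p - y) * input
        error = p - y
        weights = [wi - learning_rate * error * xi for wi, xi in zip(weights, x)]
        bias -= learning_rate * error

def predict(x):
    return sigmoid(sum(xi * wi for xi, wi in zip(x, weights)) + bias)

print([round(predict(x)) for x, _ in data])  # [0, 1, 1, 1]
```

Before training, the neuron outputs 0.5 for every input; each pass nudges the weights until its predictions match the labels.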

Training vs. Inference

Training is the learning process (usually done once using massive datasets). Inference is when the trained model makes predictions in real-time. When you use ChatGPT, you're in the inference phase — the model has already been trained.
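The split between the two phases can be seen even in a tiny model. This sketch assumes a straight-line (linear regression) model fitted by ordinary least squares, which is vastly simpler than a language model but follows the same pattern: learn parameters once, then reuse them for every prediction. The function names are hypothetical.

```python
def train(xs, ys):
    """Training: fit y = slope * x + intercept by ordinary least squares (done once)."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    slope = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
             / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - slope * mean_x
    return slope, intercept

def infer(model, x):
    """Inference: apply the already-learned parameters; no learning happens here."""
    slope, intercept = model
    return slope * x + intercept

model = train([1, 2, 3, 4], [3, 5, 7, 9])  # data follows y = 2x + 1
print(model)             # (2.0, 1.0)
print(infer(model, 10))  # 21.0
```

Calling `infer` a thousand times never changes `model`; only another call to `train` would.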

Key Difference

AI vs. Traditional Programming

Understanding this distinction is crucial for grasping why AI is such a paradigm shift in software development.

| Aspect | Traditional Programming | AI / Machine Learning |
|---|---|---|
| How rules are defined | Programmer writes explicit rules (if-then logic) | System learns rules automatically from data |
| Handling new situations | Fails or gives a wrong answer if the case wasn't explicitly programmed | Can generalize from training examples to handle unseen situations |
| Performance over time | Fixed; doesn't improve without new code | Can improve when retrained with more data |
| Best suited for | Structured tasks with clear, unchanging rules | Pattern recognition, prediction, complex decisions |
| Examples | Calculators, spreadsheet formulas, database queries | Face recognition, spam filters, language translation |
| Transparency | Fully explainable: you can read the code | Sometimes opaque (the "black box" problem in deep learning) |
| Data requirements | Minimal: logic is encoded in code | Typically requires large, high-quality (often labeled) datasets |
| Development speed | Fast for simple problems, slow for complex ones | Slow setup, but scales to highly complex tasks |
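The first row of the table can be illustrated side by side. In this sketch, a hypothetical spam check uses the number of exclamation marks in an email: the traditional version uses a threshold a programmer picked, while the "learned" version tries each candidate threshold on labeled examples and keeps the most accurate one. The data is made up, and this brute-force search is a deliberately tiny stand-in for real machine learning.

```python
def rule_based(count):
    """Traditional programming: a human chose this rule."""
    return count > 3

def learn_threshold(examples):
    """Machine learning in miniature: pick the threshold that classifies
    the labeled examples most accurately."""
    candidates = sorted({count for count, _ in examples})
    def accuracy(t):
        return sum((count > t) == label for count, label in examples)
    return max(candidates, key=accuracy)

# (number of exclamation marks, is_spam): hypothetical labeled data
examples = [(0, False), (1, False), (1, False), (2, True), (4, True), (5, True)]
threshold = learn_threshold(examples)

def learned(count):
    return count > threshold

print(threshold)                    # 1
print(rule_based(2), learned(2))    # False True
```

Given different data, `learn_threshold` would settle on a different rule without anyone rewriting the code.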

Classification

Types of AI

AI is often categorized by its capabilities. Here's how researchers think about the spectrum from today's tools to theoretical future systems.

Narrow AI (Weak AI)

All current AI systems are Narrow AI — they're designed and trained to do one specific type of task extremely well, but nothing outside that scope. A chess AI can't write emails; a translation AI can't recognize faces.

General AI (Strong AI / AGI)

Artificial General Intelligence refers to a hypothetical AI system that can perform any intellectual task a human can — learning, reasoning, and adapting across entirely different domains. It would be like a single AI that's simultaneously good at medicine, art, engineering, and cooking.

Super AI (Artificial Superintelligence)

Superintelligent AI would surpass human intelligence in every domain — scientific creativity, social intelligence, general wisdom, and more. This is purely theoretical at present and is a common subject of philosophical debate about AI safety and the future of humanity.

Clearing the Air

Common AI Myths Busted

Misconceptions about AI are widespread — from sci-fi fears to over-hyped promises. Let's set the record straight.

MYTH AI will take all our jobs and make humans obsolete

AI will change jobs significantly, but past waves of automation (from the printing press to spreadsheets) have generally created new kinds of work even as they eliminated others. AI excels at repetitive, data-heavy tasks but still struggles with creativity, empathy, and novel problem-solving. The World Economic Forum's Future of Jobs Report 2020 estimated that, by 2025, the shift in the division of labor between humans and machines could displace 85 million jobs while creating 97 million new roles, a net gain. The key is adapting your skills.

MYTH You need to know math and coding to understand AI

To use AI tools effectively, you need zero coding or math skills. To understand how AI works conceptually, you need only curiosity and a willingness to learn. This entire platform is built on that premise. Advanced AI research and engineering do require math (linear algebra, calculus, statistics) and coding, but understanding, using, and even building AI-powered applications with modern no-code tools is entirely accessible to everyone.

MYTH AI is always objective and unbiased

AI systems learn from data created by humans, and humans have biases. When training data reflects historical inequalities (e.g., facial recognition systems trained mostly on lighter-skinned faces), AI systems can perpetuate or amplify those biases. There are well-documented cases of AI showing bias in hiring, lending, criminal justice, and healthcare. Addressing AI bias is one of the most important challenges in the field today, which is why AI ethics is a critical area of study.
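One reason bias can go unnoticed is that a single overall accuracy number can hide poor performance on an under-represented group. The sketch below uses entirely made-up prediction results for two hypothetical groups, "A" (well represented in training) and "B" (rare), and compares overall accuracy with per-group accuracy.

```python
from collections import defaultdict

def accuracy_by_group(records):
    """records: (group, true_label, predicted_label) triples."""
    correct, total = defaultdict(int), defaultdict(int)
    for group, truth, pred in records:
        total[group] += 1
        correct[group] += (truth == pred)
    return {g: correct[g] / total[g] for g in total}

# Hypothetical results: group A dominated the training data, group B was rare
records = ([("A", 1, 1)] * 45 + [("A", 0, 0)] * 45
           + [("B", 1, 0)] * 6 + [("B", 0, 0)] * 4)

overall = sum(t == p for _, t, p in records) / len(records)
per_group = accuracy_by_group(records)
print(overall)    # 0.94
print(per_group)  # {'A': 1.0, 'B': 0.4}
```

A model that looks 94% accurate overall is right only 40% of the time for group B here, which is why fairness audits break results down by group.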

MYTH AI understands and thinks the way humans do

Current AI systems, no matter how impressive, do not "understand" or "think" the way humans do. They are extraordinarily sophisticated pattern-matchers. When ChatGPT answers a question, it generates its reply one token (a word or piece of a word) at a time, each time predicting a likely continuation based on patterns learned from its training data, rather than reasoning or understanding the way a person does. AI has no consciousness, emotions, genuine comprehension, or self-awareness. It's a very powerful tool that simulates understanding, but the distinction matters enormously for how we use and regulate these systems.
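The "predict the next word from statistics of the training text" idea can be shown with a toy bigram model, which simply counts which word follows which. This is an illustrative sketch on a made-up corpus: real large language models use deep neural networks over tokens and consider long stretches of preceding text, not just the previous word.

```python
from collections import Counter, defaultdict

def train_bigrams(text):
    """Count which word follows which in the training text."""
    words = text.lower().split()
    following = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        following[current][nxt] += 1
    return following

def predict_next(model, word):
    """Return the word most often seen after `word`, or None if it never appeared."""
    counts = model.get(word.lower())
    return counts.most_common(1)[0][0] if counts else None

corpus = "the cat sat on the mat the cat ate the fish"  # hypothetical training text
model = train_bigrams(corpus)
print(predict_next(model, "the"))  # cat
print(predict_next(model, "dog"))  # None
```

The model has no idea what a cat is; it only knows that "cat" followed "the" more often than anything else in its training text.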

MYTH AI is only for big tech companies and specialists

The democratization of AI is one of the most exciting developments of the past five years. Today, a freelance graphic designer can use Midjourney, a small business owner can deploy a customer service chatbot with no code, a teacher can use AI to personalize lesson plans, and a writer can use AI assistants for research. Many powerful AI tools are free or very affordable. The barriers to entry have never been lower, which is exactly why learning AI basics now gives you such a significant advantage.

Quick Reference

Quick AI Glossary

8 essential AI terms every beginner should know. See the full glossary for 200+ terms.

- Algorithm: A set of instructions or rules that a computer follows to solve a problem or complete a task. In AI, algorithms define how a model learns from data.

- Training Data: The dataset used to teach an AI model. The model analyzes this data to learn patterns. The quality and quantity of training data directly affect model performance.

- Model: The output of training an AI system: the "learned" version of the algorithm that can now make predictions or decisions on new, unseen data.

- Parameters: The internal variables of a neural network (such as its weights) that are adjusted during training. Large language models can have billions or even hundreds of billions of parameters; GPT-3, for example, has 175 billion.

- Inference: The process of using a trained AI model to make predictions on new data. When you ask ChatGPT a question, it runs inference on your input.

- Large Language Model (LLM): A type of deep learning model trained on vast text datasets to understand and generate human language. Examples include GPT-4, Claude, and Gemini.

- Prompt: The input text you give to an AI language model. How you write your prompt significantly affects the quality of the response; the practice of crafting prompts deliberately is called "prompt engineering."

- Hallucination: When an AI confidently states something that is factually incorrect or completely made up. This is a major limitation of current language models that users must be aware of.
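To see how parameter counts add up, here is a small sketch for a fully connected network, where each layer has one weight per input-output connection plus one bias per output neuron. The layer sizes are an assumed example (784 inputs, as for a 28x28-pixel image, one hidden layer of 128 neurons, and 10 outputs), not any particular published model.

```python
def count_parameters(layer_sizes):
    """Weights plus biases in a fully connected network with the given layer sizes."""
    return sum(n_in * n_out + n_out
               for n_in, n_out in zip(layer_sizes, layer_sizes[1:]))

# Hypothetical network: 784 inputs -> 128 hidden neurons -> 10 outputs
print(count_parameters([784, 128, 10]))  # 101770
```

Even this small network has over a hundred thousand parameters; widening and stacking layers is how models reach billions.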